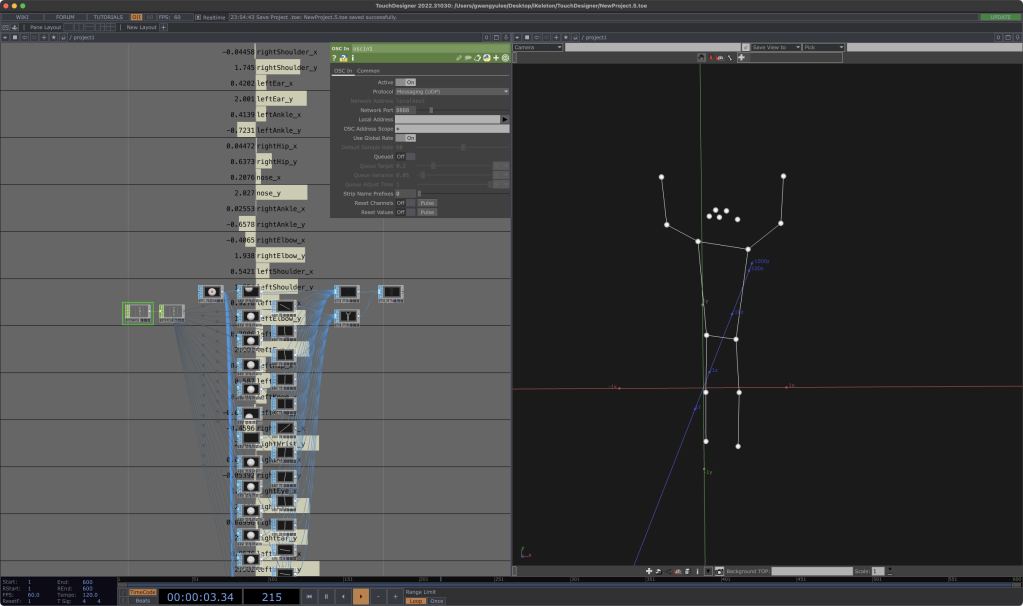

Developer/musician/media artist Gwangyu Lee has made an app for iPad and iPhone that captures skeleton data and sends it via OSC. It’s free this week, and includes a complete tutorial (for use with any tool) and a TouchDesigner example.

TouchDesigner just had its meetup last week, so I expect a crop of news from them plus some streams. While I dig through that, here’s a tip from them on this nice-looking app:

Download iKeleton OSC for free until May 5 [derivative.ca]

https://github.com/gwangyu-lee/iKeleton-OSC

This looks really nicely done – like maybe the easiest way to get at this data through Apple’s own APIs. (I’m playing with some other browser-based ways of doing this – I think one of the things about so-called “AI” that does genuinely excite me is how much better these approaches are at motion capture!)

You can use any tool that works with OSC – including Resolume, Max, and whatnot. But there is a particularly easy-to-use project file here for working with TouchDesigner, as pictured here.

Remember struggling with the first-generation Kinect, or really awful stuff with webcams? Ah, memories … I would … actually gladly leave behind. It’s beautiful how well this works now by contrast. On your damned phone. (The iPad native version is also useful, since it gives you a big view so you can see what you’re doing from a distance.)